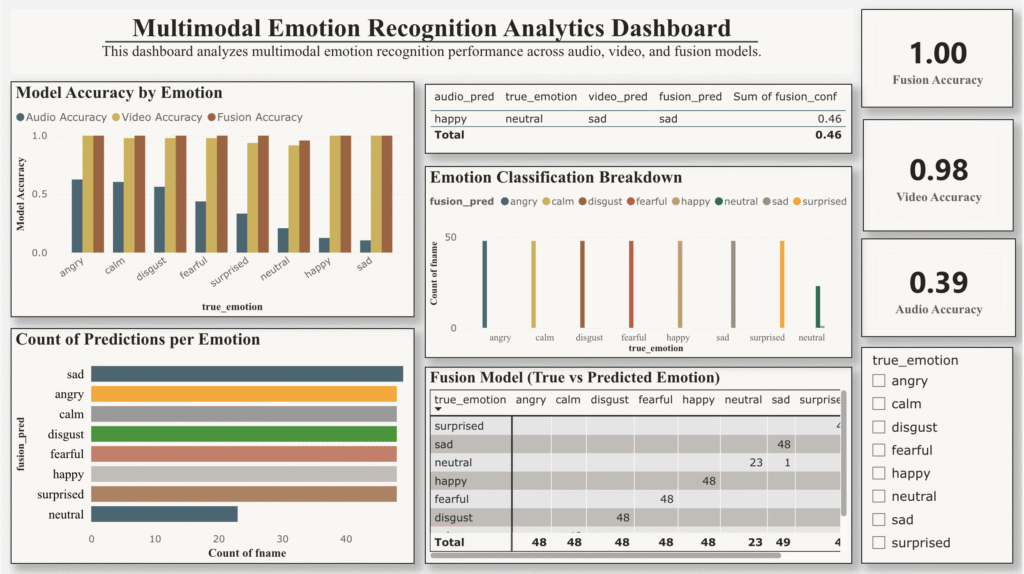

This project presents an end-to-end analytics and visualization pipeline for evaluating a multimodal emotion recognition system using predictions from audio, video, and fused audio-visual models. Rather than training models, it focuses on performance analysis, interpretability, and decision-ready insights, delivered through an interactive Power BI dashboard.

Using predictions derived from the RAVDESS benchmark dataset, the dashboard enables comparison across modalities, highlights misclassification patterns, and analyzes how model confidence relates to correctness. The result is a professional evaluation tool suitable for ML performance assessment, stakeholder reporting, and portfolio demonstration.

Key Highlights

- Compare accuracy across audio, video, and fusion models

- Analyze emotion-wise performance and misclassifications

- Explore confidence vs. correctness to assess model reliability

- Filter results by emotion in an interactive Power BI dashboard
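The metrics behind these highlights can be sketched in a few lines of Python. This is only an illustration, not the project's actual implementation: the record layout (`modality`, `true`, `pred`, `confidence`), the sample values, and the function names are all assumptions made for the example, and the dashboard itself may compute these measures differently inside Power BI.

```python
from collections import Counter, defaultdict

# Hypothetical prediction records; field names and values are illustrative only
# and are not taken from the project's data.
records = [
    {"modality": "audio", "true": "happy", "pred": "happy", "confidence": 0.90},
    {"modality": "audio", "true": "sad", "pred": "calm", "confidence": 0.55},
    {"modality": "video", "true": "happy", "pred": "happy", "confidence": 0.80},
    {"modality": "video", "true": "sad", "pred": "sad", "confidence": 0.70},
    {"modality": "fusion", "true": "happy", "pred": "happy", "confidence": 0.95},
    {"modality": "fusion", "true": "sad", "pred": "sad", "confidence": 0.85},
]


def accuracy_by_modality(rows):
    """Return the fraction of correct predictions for each modality."""
    hits, totals = Counter(), Counter()
    for r in rows:
        totals[r["modality"]] += 1
        hits[r["modality"]] += int(r["true"] == r["pred"])
    return {m: hits[m] / totals[m] for m in totals}


def misclassification_counts(rows):
    """Count (true emotion, predicted emotion) pairs for wrong predictions."""
    return Counter((r["true"], r["pred"]) for r in rows if r["true"] != r["pred"])


def mean_confidence_by_correctness(rows):
    """Return mean confidence keyed by True (correct) and False (incorrect)."""
    groups = defaultdict(list)
    for r in rows:
        groups[r["true"] == r["pred"]].append(r["confidence"])
    return {k: sum(v) / len(v) for k, v in groups.items()}


if __name__ == "__main__":
    print(accuracy_by_modality(records))
    print(misclassification_counts(records))
    print(mean_confidence_by_correctness(records))
```

A large gap between the mean confidence of correct and incorrect predictions suggests that confidence is a useful reliability signal; a small gap suggests the model is often confidently wrong.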